The Collapse of Deterministic Tapeout Economics

In Q1 2026, three major fabless semiconductor firms quietly revised their product roadmap presentations to investors. Instead of committing to fixed tapeout dates for next-generation ASICs, they presented probability distributions with confidence intervals. The shift was not cosmetic. It reflected a fundamental recalibration of how design complexity, process variability, and capital deployment interact when AI agents manage tens of thousands of design iterations simultaneously and digital twins simulate fabrication outcomes before a single wafer enters the cleanroom. The firms that made this change reported average cycle time reductions of 23 percent and first-pass silicon success rates above 91 percent. The firms still anchored to Gantt charts and deterministic milestones are now explaining to their boards why competing products reached market six months earlier.

This divergence is not about adopting new software. It is about recognizing that when generative design agents explore millions of potential architectures in parallel and fabrication digital twins ingest real-time sensor data from production lines to update yield models hourly, the traditional construct of a tapeout as a single decision point becomes analytically obsolete. The operational question is no longer whether to adopt AI-assisted workflows but how to restructure capital allocation, risk modeling, and supplier negotiations around probabilistic design convergence rather than fixed milestones.

Design Space Exploration at Machine Scale

Traditional EDA workflows treated design space exploration as a human-guided iterative process. Engineers defined constraints, ran simulations, reviewed results, adjusted parameters, and repeated. The bottleneck was always human decision latency. A senior ASIC architect might evaluate thirty design variants over three weeks. AI agents operating within modern EDA platforms now evaluate thirty thousand variants in seventy-two hours, each optimized across power, performance, area, and thermal envelopes simultaneously.
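One way to picture optimizing across power, performance, area, and thermal envelopes at once is Pareto filtering: keep only the variants that no other variant beats on every metric. The sketch below is a minimal illustration under that assumption, not a description of how any particular EDA platform works. The `Variant` type, its field names, and the sample numbers are hypothetical.

```python
from typing import NamedTuple


class Variant(NamedTuple):
    """Hypothetical design variant; every metric is 'lower is better'."""
    name: str
    power_w: float
    delay_ns: float
    area_mm2: float
    peak_temp_c: float


def dominates(a: Variant, b: Variant) -> bool:
    """True if a is no worse than b on every metric and strictly better on one."""
    a_metrics, b_metrics = a[1:], b[1:]  # skip the name field
    no_worse = all(x <= y for x, y in zip(a_metrics, b_metrics))
    strictly_better = any(x < y for x, y in zip(a_metrics, b_metrics))
    return no_worse and strictly_better


def pareto_front(variants: list[Variant]) -> list[Variant]:
    """Keep variants that no other variant dominates (quadratic-time scan)."""
    return [v for v in variants if not any(dominates(o, v) for o in variants)]


# Illustrative numbers only.
variants = [
    Variant("A", power_w=10.0, delay_ns=1.00, area_mm2=50.0, peak_temp_c=85.0),
    Variant("B", power_w=9.0, delay_ns=1.10, area_mm2=48.0, peak_temp_c=83.0),
    Variant("C", power_w=11.0, delay_ns=1.20, area_mm2=55.0, peak_temp_c=90.0),
]
front = pareto_front(variants)
print([v.name for v in front])  # A and B trade off against each other; C is dominated
```

The pairwise scan is quadratic in the number of variants, so a population of thirty thousand would call for a faster non-dominated sort. The point here is only the selection rule.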

The economics are stark. A 5nm ASIC tapeout costs between $80 million and $150 million on a fully loaded basis that includes mask sets, engineering resources, and opportunity cost. Every respin adds $30 million to $50 million and four to six months of delay. In markets where product windows are twelve to eighteen months, a respin often means missing the revenue cycle entirely. AI-assisted design space exploration reduces that risk by surfacing non-obvious trade-offs early. One hyperscaler designing custom silicon for inference workloads reported that agentic design tools identified a cache hierarchy configuration that improved latency by 14 percent while reducing die area by 9 percent, a combination human architects had not considered because it violated conventional design heuristics around memory locality.
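The respin arithmetic can be made concrete with a rough expected-value sketch. It takes the midpoints of the cost and delay ranges quoted above and assumes, purely for illustration, that each silicon attempt succeeds independently with the same probability (a geometric model). The 0.91 figure is the first-pass success rate cited earlier; the 0.60 comparison point and all function names are hypothetical.

```python
def expected_respins(first_pass_success: float) -> float:
    """Expected number of respins under a geometric model (an assumption):
    every attempt succeeds independently with the same probability."""
    if not 0.0 < first_pass_success <= 1.0:
        raise ValueError("success probability must be in (0, 1]")
    return (1.0 - first_pass_success) / first_pass_success


def expected_program(first_pass_success: float,
                     tapeout_cost_musd: float,
                     respin_cost_musd: float,
                     respin_delay_months: float) -> tuple[float, float]:
    """Return (expected total cost in $M, expected respin delay in months)."""
    n = expected_respins(first_pass_success)
    return tapeout_cost_musd + n * respin_cost_musd, n * respin_delay_months


# Midpoints of the ranges quoted in the text.
TAPEOUT_MUSD = (80 + 150) / 2   # $115M
RESPIN_MUSD = (30 + 50) / 2     # $40M
RESPIN_MONTHS = (4 + 6) / 2     # 5 months

for p in (0.60, 0.91):  # 0.60 is a hypothetical comparison point
    cost, delay = expected_program(p, TAPEOUT_MUSD, RESPIN_MUSD, RESPIN_MONTHS)
    print(f"p={p:.2f}: expected cost ${cost:.1f}M, expected delay {delay:.1f} months")
```

The model ignores the revenue lost when a respin pushes a product past its market window, which the text suggests is often the larger cost.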

Distributed ledger systems that create immutable design provenance records provide the infrastructure for this shift. When hundreds of agents propose modifications to a reference design, tracking which changes were tested under which constraints, and how they propagated through downstream verification, becomes a governance problem. Ledger-based design databases ensure that every parameter modification, simulation result, and corner case analysis is timestamped, attributed, and cryptographically linked to its antecedents. This is not theoretical. Two foundries now require fabless customers to submit ledger-anchored design packages for advanced node tapeouts, which streamlines the resolution of liability and IP ownership disputes when post-silicon issues arise.
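The phrase "cryptographically linked to its antecedents" can be illustrated with a plain hash chain, in which each record stores the hash of the record before it. This is a minimal single-process sketch, not a distributed ledger and not any foundry's submission format. The record fields, agent names, and helper functions are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, replace

GENESIS = "0" * 64  # placeholder hash that precedes the first record


@dataclass(frozen=True)
class Record:
    agent_id: str      # which agent proposed the change (hypothetical field)
    change: dict       # e.g. a parameter modification or a simulation result
    timestamp: str     # ISO 8601 string supplied by the caller
    prev_hash: str     # hash of the preceding record
    record_hash: str   # hash over this record's content plus prev_hash


def _digest(agent_id: str, change: dict, timestamp: str, prev_hash: str) -> str:
    payload = json.dumps(
        {"agent_id": agent_id, "change": change,
         "timestamp": timestamp, "prev_hash": prev_hash},
        sort_keys=True,  # stable key order so the hash is reproducible
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(chain: list[Record], agent_id: str, change: dict, timestamp: str) -> None:
    prev_hash = chain[-1].record_hash if chain else GENESIS
    digest = _digest(agent_id, change, timestamp, prev_hash)
    chain.append(Record(agent_id, change, timestamp, prev_hash, digest))


def verify(chain: list[Record]) -> bool:
    """Recompute every hash and check each link back to its predecessor."""
    prev = GENESIS
    for r in chain:
        if r.prev_hash != prev:
            return False
        if r.record_hash != _digest(r.agent_id, r.change, r.timestamp, r.prev_hash):
            return False
        prev = r.record_hash
    return True


chain: list[Record] = []
append(chain, "agent-017", {"param": "l2_cache_kb", "old": 512, "new": 768}, "2026-02-01T10:00:00Z")
append(chain, "agent-042", {"sim": "timing_ss_corner", "result": "pass"}, "2026-02-01T10:05:00Z")
print(verify(chain))  # True for the untouched chain

# Editing an earlier record breaks verification.
tampered = [replace(chain[0], change={"param": "l2_cache_kb", "old": 512, "new": 1024}), chain[1]]
print(verify(tampered))  # False
```

A hash chain only makes tampering detectable. Attribution would additionally need each agent to sign its records, and immutability across organizations depends on how the ledger is replicated, neither of which this sketch attempts.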

Digital Twins and the Industrialization of Yield Forecasting

Yield forecasting has historically been a blend of process engineering intuition, statistical sampling from pilot runs, and conservatism. A fab might project 60 percent yield at production ramp based on historical data from similar nodes, then adjust as defect density data accumulates. Digital twins invert this model. By ingesting real-time telemetry from lithography tools, etch chambers, deposition systems, and metrology instruments, digital twin platforms build physics-informed models of how process variation propagates through each mask layer. These models update continuously as wafers move through fabrication.

The operational impact is measurable. A leading foundry deployed a digital twin system across its 3nm production line in late 2025. By February 2026, the twin had reduced yield prediction error from ±12 percent to ±3 percent at the wafer lot level. More importantly, it identified a correlation between edge bead removal uniformity in the photoresist coating step and via resistance variation seven layers downstream. Human process engineers had not connected these phenomena because they occurred in separate modules operated by different teams under different KPIs. The twin flagged the correlation, engineers adjusted coating tool parameters, and via defect density dropped 40 percent within three weeks.

The capital implication is significant. Foundries allocate capacity based on yield assumptions. At a fully loaded fab, a 10 percent yield improvement is equivalent, in good-die output, to adding a fraction of a new fab without a corresponding share of the $15 billion capital outlay and thirty-month construction cycle. Digital twins enable fabs to monetize existing assets more efficiently and give fabless customers higher confidence in supply commitments. This is why foundry allocation agreements in 2026 increasingly include yield guarantees tied to digital twin model predictions rather than static percentages.
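How large the fab-equivalent fraction is depends on whether "10 percent" means a relative gain or ten percentage points. The sketch below works through both readings, assuming wafer starts and dies per wafer stay fixed so that good-die output scales linearly with yield. The 60 percent baseline is borrowed from the ramp example earlier in this section, and scaling the $15 billion figure linearly is a simplification, not a claim about real fab economics.

```python
def equivalent_fab_fraction(baseline_yield: float, improved_yield: float) -> float:
    """Extra good-die output as a fraction of the fab's current output,
    holding wafer starts and dies per wafer constant (an assumption)."""
    if not (0.0 < baseline_yield <= 1.0 and 0.0 < improved_yield <= 1.0):
        raise ValueError("yields must be in (0, 1]")
    return improved_yield / baseline_yield - 1.0


NEW_FAB_CAPEX_BUSD = 15.0  # capital outlay quoted in the text
BASELINE = 0.60            # illustrative ramp yield from the earlier example

readings = {
    "10 percent relative gain": BASELINE * 1.10,   # 60% -> 66%
    "10 percentage points": BASELINE + 0.10,       # 60% -> 70%
}

for label, improved in readings.items():
    fraction = equivalent_fab_fraction(BASELINE, improved)
    capex = fraction * NEW_FAB_CAPEX_BUSD  # naive linear scaling of capex
    print(f"{label}: {fraction:.1%} more output, about ${capex:.1f}B of new-fab capex")
```

Under these assumptions the two readings differ by roughly two thirds, which is one reason a yield guarantee needs to state which definition it uses.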

Autonomous Packaging Assembly Lines and the New Bottleneck

Advanced packaging has become the critical path for AI accelerators. Chiplet integration, heterogeneous 3D stacking, and high-bandwidth memory interfaces introduce mechanical, thermal, and electrical complexity that rivals the silicon itself. Traditional packaging lines relied on manual inspection, sampling-based quality control, and reactive rework. Defect rates for advanced packages routinely exceeded 8 percent, and diagnosing failures required destructive cross-sectioning and weeks of failure analysis.

AI-driven vision systems and agentic control loops have transformed this bottleneck into a competitive advantage for early movers. Autonomous packaging lines now use inline optical coherence tomography and X-ray laminography to inspect every substrate, every micro-bump, and every underfill void in real time. Machine learning models trained on millions of failure modes predict which units will fail electrical test before they reach the test socket. Suspected defects are flagged for rework or scrap immediately, reducing cycle time and preventing bad units from consuming downstream capacity.

One OSAT reported that deploying agentic quality control across its chiplet packaging line reduced package-level defect escape rate from 4.2 percent to 0.7 percent and cut failure analysis turnaround time from eleven days to nineteen hours. The financial leverage is direct. For a high-margin AI accelerator chip priced at $15,000 per unit, every percentage point improvement in package yield translates to millions in recovered revenue per production batch. Equally important, faster failure diagnosis accelerates the feedback loop to design teams, enabling mask revisions or assembly process tweaks that prevent defects from propagating across subsequent wafer lots.
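Whether a percentage point of package yield is worth "millions" depends on batch size, which the figures above do not state. The short calculation below makes that dependence explicit, using the $15,000 unit price from the text. The batch sizes are hypothetical, and the function assumes every recovered unit is sold at full price.

```python
def recovered_revenue_usd(units_started: int,
                          yield_gain_points: float,
                          unit_price_usd: int) -> float:
    """Revenue from additional good units when package yield rises by
    yield_gain_points percentage points."""
    return units_started * unit_price_usd * yield_gain_points / 100


UNIT_PRICE_USD = 15_000  # price quoted in the text

for batch in (1_000, 10_000, 50_000):  # hypothetical batch sizes
    gain = recovered_revenue_usd(batch, 1.0, UNIT_PRICE_USD)
    print(f"{batch:>6} units started: ${gain:,.0f} per percentage point")
```

At this price each point is worth $150 per unit started, so the "millions" threshold is crossed at batches of roughly seven thousand units or more.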

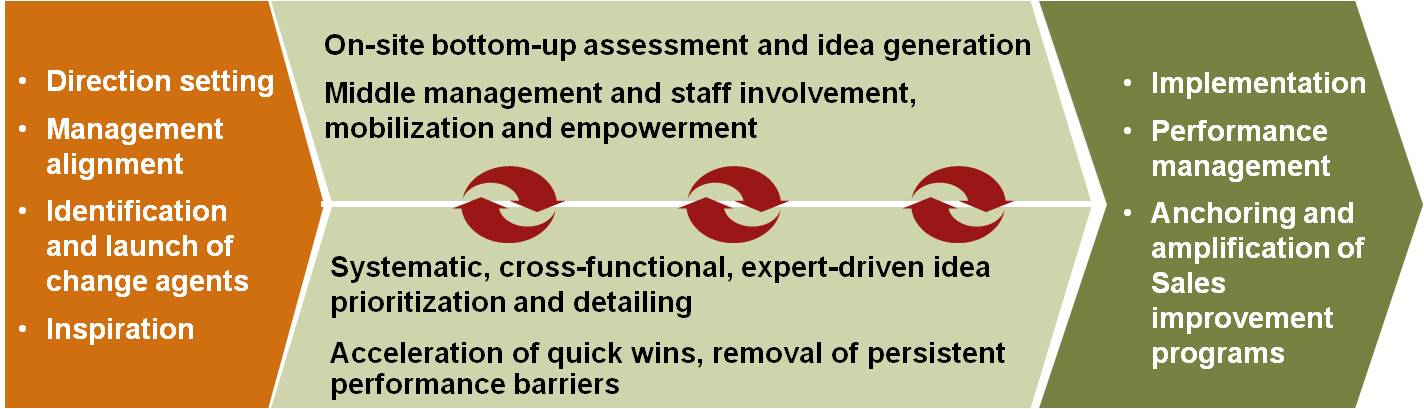

Capital Allocation Under Probabilistic Design Convergence

The transition from deterministic tapeout schedules to probabilistic convergence models requires finance and engineering organizations to align around new primitives. CFOs accustomed to stage-gate funding releases tied to milestones must now approve budget tranches based on confidence thresholds derived from AI agent exploration metrics. Instead of asking "Did we complete physical design?" the question becomes "What is the probability that we achieve target frequency at target power within target area given current design state?"

This is not a semantic shift. It changes how semiconductor firms structure R&D budgets, negotiate foundry prepayments, and manage investor guidance. A fabless firm running fifty design variants in parallel with AI agents can model the probability distribution of tapeout timing and allocate foundry capacity accordingly. If the 80th percentile tapeout date is Q3 2026 and the 95th percentile is Q4 2026, the firm can negotiate conditional capacity reservations with penalty clauses that reflect probabilistic delivery. Foundries benefit because they can oversubscribe capacity more safely when customer commitments are probabilistic rather than binary.
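The percentile dates in this example could come from a Monte Carlo model of the remaining schedule. The sketch below sums three remaining phases, each drawn from a triangular distribution, and reads off the 50th, 80th, and 95th percentiles. The phase names and their (min, most likely, max) durations are hypothetical placeholders. A real model would be driven by design agent progress metrics rather than hand-entered ranges, and it would not treat the phases as independent.

```python
import random
import statistics

# Hypothetical remaining phases: (min, most likely, max) duration in weeks.
PHASES = {
    "timing_closure": (4, 8, 16),
    "physical_verification": (3, 5, 10),
    "signoff": (2, 3, 6),
}


def simulate_weeks_to_tapeout(rng: random.Random) -> float:
    """One Monte Carlo draw: sum of independently sampled phase durations."""
    # random.triangular takes (low, high, mode), in that order.
    return sum(rng.triangular(low, high, mode) for low, mode, high in PHASES.values())


rng = random.Random(2026)  # fixed seed so the run is repeatable
samples = [simulate_weeks_to_tapeout(rng) for _ in range(20_000)]

# statistics.quantiles with n=100 returns 99 cut points; index k-1 is the kth percentile.
cuts = statistics.quantiles(samples, n=100)
p50, p80, p95 = cuts[49], cuts[79], cuts[94]

print(f"median: {p50:.1f} weeks, 80th percentile: {p80:.1f}, 95th percentile: {p95:.1f}")
```

The gap between the 80th and 95th percentiles is the quantity a conditional capacity reservation would price: the wider it is, the more the firm pays to hold capacity it may not use on the earlier date.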

The firms capturing this advantage are embedding AI agent outputs directly into their financial planning systems. One top-ten fabless semiconductor company now generates weekly Monte Carlo simulations of project portfolio outcomes based on design agent progress metrics, digital twin yield forecasts, and packaging line throughput models. The executive team reviews these distributions in monthly operating reviews, adjusting headcount, foundry commitments, and customer delivery promises in response to shifting probability curves rather than waiting for binary milestone hits or misses.

What to Do Next Quarter

Semiconductor executives should take three specific actions before Q3 2026. First, audit your current EDA toolchain to identify which design exploration tasks can be delegated to AI agents and establish baseline metrics for cycle time, design variant coverage, and first-pass success rate so you can measure improvement objectively. Second, initiate a pilot digital twin deployment on one production line or packaging cell, ensuring that real-time sensor integration and model update cadence are defined contractually with your foundry or OSAT partner, not treated as aspirational features. Third, convene your finance and engineering leadership to prototype a probabilistic project planning framework for at least one next-generation product, modeling tapeout timing and yield as distributions rather than point estimates and linking funding tranches to confidence intervals rather than stage gates. These moves are not research projects. They are operational imperatives that determine whether you control your tapeout schedule or it controls you.