The Infrastructure Inversion Nobody Predicted

TSMC disclosed in its March 2026 investor update that twenty-three percent of its N3E design wins now pass through what it calls "agent-mediated design closure" before final tapeout. Samsung Foundry reported similar figures in February. This is not a pilot program. The world's two largest contract manufacturers have quietly reclassified their electronic design automation environments from software toolchains into inference infrastructure, where AI agents negotiate power-performance-area tradeoffs autonomously across millions of design permutations per hour. The implications extend far beyond faster chips. When your EDA stack becomes a runtime for autonomous optimization, the entire value chain from IP licensing to mask production reorients around who controls the agentic layer. Foundries that treated EDA as purchased software now find themselves building proprietary agent orchestration platforms, while fabless designers who once owned the full design stack are ceding architectural decisions to systems they observe but do not command.

The semiconductor industry has spent three decades optimizing a sequential handoff model: architects specify, designers implement, verification teams check, foundries manufacture. That assembly line is collapsing. AI agents now operate across all four domains simultaneously, proposing architectural modifications based on real-time yield data from fabs that have not yet produced the chip in question. This is not speculative. It is measurable in tapeout cycle compression, defect-per-wafer reduction, and the capital reallocation decisions being made this quarter by every major player.

Digital Twins That Generate Their Own Training Data

The traditional semiconductor digital twin modeled a fabrication process as a static physics simulation. You fed it process parameters, it returned predicted outcomes, engineers adjusted recipes accordingly. The 2026 generation does something categorically different: it instruments the physical fab with sufficient sensor density that the twin can propose experiments, observe outcomes, and retrain its own models without human hypothesis formation. Intel's Fab 34 in Ireland now runs what it terms "autonomous recipe exploration" on seven percent of its CoWoS-equivalent advanced packaging lines. The system proposes thermal profile variations, executes them on dedicated test wafers, measures results via inline metrology, and updates its thermal-stress prediction models before the next lot arrives.
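The propose, execute, measure, update cycle described above can be sketched in a few lines. This is a toy illustration under loud assumptions: `run_test_wafer` is a hypothetical stand-in for the physical tool plus inline metrology, the quadratic warpage response and every number in it are invented, and the "model" is just a parabola fitted through the three best observations rather than anything resembling a production thermal-stress model.

```python
def run_test_wafer(peak_temp_c):
    """Hypothetical stand-in for running a thermal profile on a test wafer
    and reading warpage from inline metrology. The response curve is invented."""
    return 0.002 * (peak_temp_c - 245.0) ** 2 + 1.0


def parabola_vertex(p1, p2, p3):
    """x-coordinate of the vertex of the parabola through three (x, y) points."""
    (x1, y1), (x2, y2), (x3, y3) = p1, p2, p3
    num = (x2 - x1) ** 2 * (y2 - y3) - (x2 - x3) ** 2 * (y2 - y1)
    den = (x2 - x1) * (y2 - y3) - (x2 - x3) * (y2 - y1)
    return None if den == 0 else x2 - 0.5 * num / den


def explore(initial_temps, max_rounds=10, tol=0.1):
    # Seed the model with a few measured lots.
    observations = [(t, run_test_wafer(t)) for t in initial_temps]
    for _ in range(max_rounds):
        # "Retrain": refit the surrogate to the three best observations so far.
        best_three = sorted(observations, key=lambda o: o[1])[:3]
        proposal = parabola_vertex(*best_three)
        # Stop when the model has nothing new to propose.
        if proposal is None or any(abs(proposal - t) < tol for t, _ in observations):
            break
        # Execute the proposed experiment and record the measurement.
        observations.append((proposal, run_test_wafer(proposal)))
    return min(observations, key=lambda o: o[1])


best_temp, best_warpage = explore([210.0, 250.0, 290.0])
print(f"best peak temperature: {best_temp:.1f} C, warpage: {best_warpage:.3f}")
```

The point of the sketch is the control flow: no human forms a hypothesis between rounds. A real system would also bound each proposal to a qualified process window before it reaches a tool.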

This closes a loop that has been open since the industry began: the gap between simulated and actual process performance. Historically, that gap required armies of process integration engineers to characterize, model, and compensate. The economic constraint was not compute cost but the latency of human-in-the-loop learning. By removing that latency, digital twins transition from analysis tools to autonomous process controllers. GlobalFoundries reported in January that its 12nm FinFET line in Malta reduced defect density by nineteen percent over six months using agent-driven process tuning, with zero additional capital expenditure on lithography or deposition equipment. The yield improvement came entirely from faster iteration through the parameter space than human teams could achieve.

The strategic tension emerges when these twins begin sharing learned parameters across fabs. A twin that optimizes etch uniformity in Arizona can export its learned compensation strategies to a Singapore facility facing similar plasma chemistry challenges. Foundries are now deciding whether to treat twin-generated process knowledge as proprietary competitive advantage or as a shared resource within consortia. TSMC and Samsung have both filed patents in Q1 2026 on methods for federated learning across geographically distributed fab twins, suggesting they view cross-site learning as a moat worth defending. Meanwhile, the SEMI Digital Twin Standards Working Group is attempting to establish interoperability protocols before proprietary implementations fragment the ecosystem irreversibly.
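To make concrete what "sharing learned parameters" can mean, here is a minimal sketch of generic federated averaging, in which each site contributes model weights rather than raw process data. It is not a description of the methods in the TSMC or Samsung filings, whose details are not given here; the site names, sample counts, and weight vectors are all invented for illustration.

```python
def federated_average(site_updates):
    """Combine per-site model weights, weighting each site by its sample count.

    site_updates: list of (num_samples, weights) tuples, one per fab.
    Only the weights leave each site; the underlying wafer data does not.
    """
    total = sum(n for n, _ in site_updates)
    dim = len(site_updates[0][1])
    return [sum(n * w[i] for n, w in site_updates) / total for i in range(dim)]


# Hypothetical etch-compensation weights learned independently at two sites.
arizona = (3000, [0.80, -0.10])
singapore = (1000, [0.40, 0.30])

shared_weights = federated_average([arizona, singapore])
print(shared_weights)
```

The weighting by sample count is the design choice that matters commercially: the site that contributes more data pulls the shared model harder, which is exactly the kind of asymmetry consortium terms would have to address.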

Agentic EDA and the Tapeout Collapse

Synopsys and Cadence have both released what they now call "agentic EDA suites" in the past eight months. These are not incremental updates to Fusion Compiler or Innovus. They are runtime environments where multiple specialized agents—one focused on timing closure, another on IR drop mitigation, a third on DFM rule adherence—negotiate design modifications in parallel rather than sequentially. The result is a compression of what used to be twelve-week tapeout preparation cycles into four weeks for complex AI accelerator ASICs. Broadcom confirmed in February that its latest hyperscaler AI chip, targeting a mid-2026 production start, went from RTL freeze to tapeout-ready GDS in twenty-nine days, a timeline it attributed entirely to agent-mediated design closure.
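The difference between sequential and parallel closure is easier to see in code. The sketch below is a deliberately simplified, hypothetical orchestration loop, not the Synopsys or Cadence implementation: three agents each evaluate every candidate design, each can veto, and the orchestrator keeps the accepted candidate with the best combined score. The thresholds, the scoring, and the candidate data are assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    slack_ps: float      # worst timing slack in picoseconds (negative = violation)
    ir_drop_mv: float    # worst IR drop in millivolts
    dfm_violations: int  # count of DFM rule violations


# Each agent returns (accepts, score). Limits are illustrative, not real sign-off rules.
def timing_agent(c):
    return c.slack_ps >= 0, c.slack_ps


def ir_drop_agent(c):
    return c.ir_drop_mv <= 50.0, -c.ir_drop_mv


def dfm_agent(c):
    return c.dfm_violations == 0, -float(c.dfm_violations)


AGENTS = [timing_agent, ir_drop_agent, dfm_agent]


def negotiate(candidates):
    """Return the candidate that every agent accepts with the highest summed score."""
    accepted = []
    for c in candidates:
        verdicts = [agent(c) for agent in AGENTS]
        if all(ok for ok, _ in verdicts):
            accepted.append((sum(score for _, score in verdicts), c))
    return max(accepted, key=lambda pair: pair[0])[1] if accepted else None


candidates = [
    Candidate("A", slack_ps=12.0, ir_drop_mv=55.0, dfm_violations=0),  # IR drop veto
    Candidate("B", slack_ps=5.0, ir_drop_mv=40.0, dfm_violations=0),
    Candidate("C", slack_ps=8.0, ir_drop_mv=48.0, dfm_violations=0),
    Candidate("D", slack_ps=-3.0, ir_drop_mv=30.0, dfm_violations=0),  # timing veto
]
winner = negotiate(candidates)
print(winner.name if winner else "no candidate accepted")
```

In a sequential flow, candidate A would survive timing closure and only fail weeks later at IR drop analysis. Evaluating all constraints on every candidate at once is where the schedule compression comes from. Summing scores with different units is crude; a real orchestrator would need an explicit cost model.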

The economic impact is nonlinear. A reduction of that size in tapeout preparation time does not merely accelerate revenue recognition. It fundamentally alters the risk profile of leading-edge design starts. When the cost of a respin drops from eight months and fourteen million dollars to ten weeks and four million, design teams can afford to take architectural risks they previously avoided. This shifts competitive dynamics toward teams that can iterate fastest, not those with the largest initial R&D budgets. Startups designing custom AI inference chips are now competitive on time-to-market with established players, provided they have access to the same agentic EDA infrastructure.
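The figures quoted above can be worked through directly. The short calculation below uses only numbers from the text (twelve weeks to four weeks for tapeout preparation, eight months and fourteen million dollars versus ten weeks and four million for a respin); the one assumption is converting months to weeks at 52/12.

```python
WEEKS_PER_MONTH = 52 / 12  # assumption used to compare months with weeks

old_respin = {"weeks": 8 * WEEKS_PER_MONTH, "cost_musd": 14.0}
new_respin = {"weeks": 10.0, "cost_musd": 4.0}

# Tapeout preparation: twelve weeks compressed to four.
cycle_compression = 1 - 4 / 12

# How many new-style respins fit inside the budget and schedule of one old one?
by_budget = int(old_respin["cost_musd"] // new_respin["cost_musd"])
by_schedule = int(old_respin["weeks"] // new_respin["weeks"])
respins_affordable = min(by_budget, by_schedule)

print(f"cycle compression: {cycle_compression:.0%}")
print(f"respins per old respin: {respins_affordable}")
```

Three attempts for the price and schedule of one is what makes the effect nonlinear: a team can treat the first tapeout as an experiment rather than a commitment.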

The challenge is that infrastructure access is not democratizing; it is re-centralizing. TSMC offers its agent-mediated design closure only to customers taping out on N3E or more advanced nodes, and only through its Grand Alliance program. Samsung provides similar capabilities but requires design teams to use its proprietary Samsung Advanced Foundry Ecosystem tools, which lock in future manufacturing commitments. The EDA vendors find themselves in a precarious position: their software is becoming middleware in a stack controlled by foundries, who increasingly view the agentic layer as a manufacturing differentiator rather than a neutral design utility.

Distributed Ledger as Design Provenance Infrastructure

The agentic EDA model introduces a verification crisis. When an AI agent proposes a floorplan modification that improves power efficiency by three percent, which entity owns that intellectual property? The fabless design house that initiated the tapeout? The foundry whose agent made the suggestion? The EDA vendor whose platform hosted the agent? This is not an academic question. Arm filed suit against an unnamed foundry partner in March 2026 alleging that agent-generated optimizations to a licensed CPU core constituted derivative works that violated its architecture license terms. The case will likely define IP boundaries for a generation.

Distributed ledger infrastructure is emerging as the solution, not for its decentralization properties but for its tamper-evident audit trails. Applied Materials and Siemens EDA jointly announced in February a blockchain-based design provenance system that logs every agent-initiated design modification with cryptographic attribution to the requesting party, the executing agent, and the underlying model version. The goal is not to eliminate disputes but to make them adjudicable. When every design decision is logged with nanosecond precision and cryptographic integrity, courts can determine whether a particular optimization was derived from proprietary foundry process knowledge or from publicly available design rules.
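The tamper-evident property is the part worth illustrating, and it does not require a full blockchain to demonstrate. The sketch below is a minimal hash-chained log using Python's standard `hashlib` and `json` modules; it is not the Applied Materials and Siemens EDA system, whose design is not described here. The field names are assumptions, and real cryptographic attribution would additionally need digital signatures from each party, which this example omits.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def _digest(body):
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, requester, agent, model_version, change):
    """Append a design modification, chaining it to the previous entry's hash."""
    body = {
        "requester": requester,
        "agent": agent,
        "model_version": model_version,
        "change": change,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Recompute every hash; any edit to an earlier entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, "fabless-customer", "timing-agent", "v1.3", "resize buffer in block X")
append_entry(log, "foundry", "dfm-agent", "v2.0", "widen metal spacing in block X")
print(verify(log))
```

Changing any field of an earlier entry after the fact, such as who requested a modification, causes `verify` to fail from that entry onward. That is what makes a dispute adjudicable: the record may be contested, but it cannot be quietly rewritten.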

The system also enables new commercial models. A foundry could offer discounted wafer pricing in exchange for rights to generalize learnings from a customer's agent-optimized design, with the ledger providing transparent accounting of which optimizations were customer-originated versus foundry-contributed. Early adoption is concentrated among hyperscalers designing their own AI chips, who have the legal infrastructure to negotiate such terms. Smaller fabless players are waiting for industry-standard terms to emerge, which the GSA is attempting to draft but has not yet published.

The Capital Reallocation Already Underway

The executives making decisions this quarter are not waiting for standards bodies or litigation outcomes. They are reallocating capital from process R&D toward inference infrastructure. Intel announced in January a four-hundred-million-dollar investment in on-premises GPU clusters dedicated to training fab digital twins and EDA agents, funded by a corresponding reduction in its advanced packaging equipment budget. The company is explicitly betting that agent-driven process optimization will yield more performance improvement per dollar than new capital equipment.

Similarly, Qualcomm disclosed in its Q1 2026 earnings that it is shifting twelve percent of its design engineering headcount from manual verification tasks to agent supervision and training data curation. The company stated plainly that agents now close timing on eighty-six percent of its blocks without human intervention, and the bottleneck is ensuring agents learn from the fourteen percent of cases that still require expert judgment. This is a workforce transformation already in motion, not a five-year roadmap.

Foundries are making parallel moves. TSMC's capex guidance for 2026 included a previously undisclosed line item of six hundred million dollars for "AI infrastructure supporting design and manufacturing convergence," separate from its traditional process development spending. Samsung's foundry division is reportedly hiring more machine learning engineers than process integration engineers for the first time in company history. These are not hedges. They are primary strategies based on observed returns from deployed systems.

What to Do Next Quarter

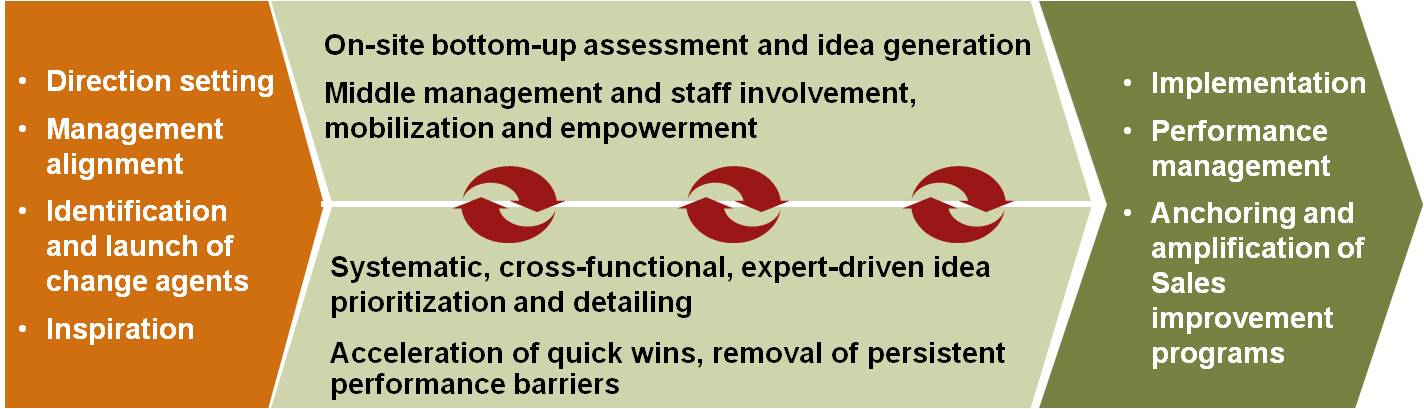

If you lead design engineering, establish a formal agent oversight framework now, before your first agent-generated tapeout reaches production. This means defining which design decisions require human approval, how to log agent rationale for post-tapeout review, and what metrics determine whether an agent's suggestions are improving or degrading design quality. Broadcom's approach is instructive: it requires agents to generate human-readable justifications for any change affecting more than five percent of a block's area, power, or timing, and maintains a parallel human-executed baseline for the first three tapeouts to validate agent performance.

If you run foundry operations, treat your digital twin as a first-class product, not a simulation tool. That means staffing it with productization resources, establishing API contracts for customer access, and deciding now whether it will be a proprietary advantage or a platform you expose to ecosystem partners. The federated learning decision cannot wait another quarter; first movers will define the standards.

If you control semiconductor capital allocation, model the ROI of inference infrastructure against traditional process equipment with the same rigor you apply to lithography purchases. Intel's four-hundred-million-dollar GPU investment is not a rounding error; it is a statement that agent-driven optimization has crossed the threshold from experiment to core capability. Your competitors are running that same calculation, and the answers are changing quickly enough that last year's assumptions no longer hold.
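For design engineering leads, the five percent justification rule attributed to Broadcom above is simple enough to encode as a gate in a review pipeline. The sketch below is one hypothetical way to do it; the metric names, the dictionary layout, and the return strings are assumptions for illustration, not Broadcom's actual tooling.

```python
THRESHOLD = 0.05  # five percent, per the rule described in the text

METRICS = ("area_um2", "power_mw", "delay_ps")  # hypothetical metric names


def needs_justification(before, after, threshold=THRESHOLD):
    """True if area, power, or timing moves by more than the threshold."""
    return any(
        abs(after[m] - before[m]) / before[m] > threshold for m in METRICS
    )


def review(change):
    """Block large agent changes that arrive without a human-readable rationale."""
    large = needs_justification(change["before"], change["after"])
    if large and not change.get("justification"):
        return "blocked: justification required"
    return "accepted"


baseline = {"area_um2": 1000.0, "power_mw": 200.0, "delay_ps": 500.0}

small_change = {
    "before": baseline,
    "after": {"area_um2": 1010.0, "power_mw": 198.0, "delay_ps": 500.0},
}
large_change = {
    "before": baseline,
    "after": {"area_um2": 1020.0, "power_mw": 185.0, "delay_ps": 500.0},
}
justified_change = {**large_change, "justification": "Clock gating added to idle datapath."}

for change in (small_change, large_change, justified_change):
    print(review(change))
```

A gate like this only checks that a rationale exists, not that it is sound, which is why the parallel human-executed baseline matters during the first few tapeouts.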