The Pattern No One Is Naming

In the past eleven months, three separate engineering initiatives inside Verizon's network operations group quietly merged into a single platform team. AT&T dissolved its dedicated content moderation AI unit and folded those capabilities into what it now calls its Universal Network Intelligence layer. Deutsche Telekom AG reported in its Q1 2026 earnings that it reduced operational AI models from forty-seven to nine while simultaneously improving latency-sensitive decision accuracy by eighteen percent. The pattern is not about doing more with AI. It is about collapsing decades of siloed operational systems into single autonomous control planes that make millisecond decisions across what were previously isolated domains: radio access network optimization, subscriber behavior prediction, content policy enforcement, ad inventory allocation, and threat detection. The economic forcing function is not innovation theater. It is the fact that running separate AI inference stacks for each operational domain now costs major telecommunications, media, and technology (TMT) operators between $340 million and $890 million annually in redundant compute, duplicate data pipelines, and coordination overhead that scales exponentially with traffic growth.

The control plane is not a metaphor. It is a specific architectural layer that separates decision-making logic from the infrastructure executing those decisions. In traditional telecom networks, the control plane managed call routing and session establishment. In cloud infrastructure, it orchestrates workload placement and resource allocation. What is emerging in 2026 is a unified autonomous control plane that treats 5G radio resource allocation, real-time bidding for ad impressions, content toxicity scoring, and DDoS mitigation as variants of the same computational problem: contextual resource assignment under latency and capacity constraints with adversarial inputs. The operators building these systems are not research labs. They are production engineering teams under mandate to cut operational expenditure by fifteen to twenty-three percent while absorbing forty to sixty percent annual traffic growth and meeting increasingly granular SLA commitments that now specify per-user experience guarantees rather than aggregate network performance.
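To make the "same computational problem" framing concrete, here is a minimal Python sketch that treats requests from every domain as contextual resource assignment under latency and capacity constraints. The `Request` fields, the value-density ordering, and the single shared capacity pool are illustrative assumptions rather than a description of any operator's system, and adversarial inputs are not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    # Hypothetical request shape: any domain (radio, ads, moderation, security)
    # expresses its need as units of a shared resource, a value score, and a deadline.
    request_id: str
    domain: str
    units: int          # assumed > 0
    value: float
    deadline_ms: float


def assign(requests, capacity_units, now_ms, est_latency_ms):
    """Greedy value-density assignment under capacity and latency constraints.

    Requests whose deadline cannot be met given the estimated decision latency
    are dropped; the rest are admitted in order of value per unit until
    capacity runs out. Returns (admitted request ids, leftover capacity).
    """
    feasible = [r for r in requests if now_ms + est_latency_ms <= r.deadline_ms]
    # Highest value per unit first; ties broken by id so results are deterministic.
    feasible.sort(key=lambda r: (-r.value / r.units, r.request_id))
    admitted, remaining = [], capacity_units
    for r in feasible:
        if r.units <= remaining:
            admitted.append(r.request_id)
            remaining -= r.units
    return admitted, remaining
```

Greedy ordering by value per unit is a standard heuristic for knapsack-style admission: it is cheap enough for a millisecond budget but not optimal, which is one reason a production control plane would layer negotiation and policy on top of a primitive like this.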

The Economics That Make This Inevitable

The expense of running separate AI inference infrastructure for each operational domain is colliding with three simultaneous cost pressures. First, GPU and TPU lease rates for frontier model inference rose twenty-two percent between January 2025 and March 2026 as hyperscaler capacity allocations tightened. Second, the energy cost to run distributed inference at the edge for latency-sensitive decisions now represents nine to fourteen percent of total network operating expense for Tier 1 carriers, up from four to six percent in 2024. Third, the EU AI Act and emerging Federal Communications Commission guidelines on algorithmic transparency mandate explainability and audit trails, which call for shared observability infrastructure rather than per-model logging systems.

Comcast reported in February 2026 that consolidating its advertising optimization, content recommendation, and network capacity planning onto a shared inference substrate reduced total cost of ownership by thirty-one percent while cutting mean time to deploy new decision logic from forty-two days to six days. The performance gain comes from eliminating data movement. In legacy architectures, the same subscriber session data—location, device type, current bandwidth consumption, content preferences, ad interaction history—gets copied and transformed across multiple systems. Each copy introduces latency, storage cost, and synchronization risk. A unified control plane maintains a single authoritative real-time representation of network and user state, updated at sub-second intervals through distributed ledger synchronization or gossip protocols depending on consistency requirements.
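One common way to keep a single logical view of subscriber state across replicas, without copying it through ETL pipelines, is a last-writer-wins map that nodes reconcile by gossip. The sketch below assumes timestamps come from a loosely synchronized clock and breaks ties by node ID; the article does not say which merge rule any operator uses, and `SessionState` and its key format are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SessionState:
    """Hypothetical per-node replica of subscriber session state.

    Each key is a last-writer-wins (LWW) register: the entry with the highest
    (timestamp, node_id) pair wins, so replicas converge regardless of the
    order in which gossip exchanges happen.
    """
    node_id: str
    entries: dict = field(default_factory=dict)  # key -> (timestamp, node_id, value)

    def put(self, key, value, timestamp):
        self._apply(key, (timestamp, self.node_id, value))

    def get(self, key):
        entry = self.entries.get(key)
        return None if entry is None else entry[2]

    def _apply(self, key, entry):
        current = self.entries.get(key)
        # Order by (timestamp, node_id) only; the value never takes part in the comparison.
        if current is None or entry[:2] > current[:2]:
            self.entries[key] = entry

    def merge(self, other):
        """One gossip exchange: pull every entry from a peer and keep the winners."""
        for key, entry in other.entries.items():
            self._apply(key, entry)
```

Because the merge always keeps the larger `(timestamp, node_id)` pair, it is commutative and idempotent, so repeated or reordered gossip rounds still converge. The trade-off is that a concurrent write carrying an older timestamp is silently discarded, which suits fast-changing telemetry such as cell location but would not suit events that must never be lost.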

The distributed ledger component is not blockchain marketing. It is Merkle-tree-based state reconciliation across geographically distributed edge nodes that need to make autonomous decisions without round-tripping to centralized databases. When a 5G base station needs to decide whether to allocate additional spectrum to a user streaming 4K video who is also a high-value advertising target currently in a retail location, that decision happens in seven to twelve milliseconds. The control plane aggregates subscriber lifetime value models, real-time inventory availability from ad exchanges, current radio congestion levels, and content delivery network cache status. These inputs arrive from systems that historically could not share state without complex ETL pipelines and multi-second latencies. Distributed ledger infrastructure provides cryptographically verified state synchronization with sub-100-millisecond consistency guarantees across edge nodes that may be operating in degraded connectivity conditions. Because that synchronization bound is far longer than the decision budget, each node decides against its locally replicated state rather than waiting for its peers to converge.
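Merkle-tree reconciliation lets two edge nodes discover which parts of their state disagree by exchanging a handful of hashes instead of the full state. The sketch below follows the bucketed anti-entropy approach popularized by Amazon's Dynamo and descendants such as Cassandra; the bucket count, SHA-256 hashing, and `key=value` leaf encoding are assumptions for illustration. It shows divergence detection only, not signing or the transport between nodes.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def bucket_of(key: str, n_buckets: int) -> int:
    # Stable assignment: every node must place a given key in the same bucket.
    return int.from_bytes(_h(key.encode())[:4], "big") % n_buckets


def build_tree(state: dict, n_buckets: int = 8) -> list:
    """Return the tree as a list of levels: leaf hashes first, [root] last."""
    if n_buckets < 1 or n_buckets & (n_buckets - 1):
        raise ValueError("n_buckets must be a power of two")
    buckets = [[] for _ in range(n_buckets)]
    for key in sorted(state):  # sorted so leaf contents do not depend on insertion order
        buckets[bucket_of(key, n_buckets)].append(f"{key}={state[key]}")
    level = [_h("\n".join(b).encode()) for b in buckets]
    levels = [level]
    while len(level) > 1:
        # Each parent hashes the concatenation of its two children.
        level = [_h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def divergent_buckets(tree_a: list, tree_b: list) -> list:
    """Walk down from the root, descending only into subtrees whose hashes differ."""
    if tree_a[-1] == tree_b[-1]:
        return []
    suspects = [0]
    for depth in range(len(tree_a) - 2, -1, -1):
        children = [c for i in suspects for c in (2 * i, 2 * i + 1)]
        suspects = [c for c in children if tree_a[depth][c] != tree_b[depth][c]]
    return suspects
```

Only the buckets returned by `divergent_buckets` need to be shipped between nodes, which is what keeps reconciliation cheap over degraded links.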

The Architectural Inflection

The technical architecture emerging as the de facto standard separates three layers. The perception layer runs specialized models for domain-specific feature extraction: radio signal processing for 5G optimization, computer vision and NLP for content moderation, behavioral embedding models for subscriber analytics. The reasoning layer is a multi-agent orchestration system where specialized agents—each governing a bounded operational domain—negotiate resource allocation, enforce policy constraints, and execute coordinated decisions. The execution layer translates decisions into infrastructure commands: radio resource block assignments, content delivery network cache invalidation, ad server bid responses, firewall rule updates.
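The separation of concerns among the three layers can be sketched as three functions with typed hand-offs. Everything here is hypothetical: the feature names, the thresholds in `reason`, and the command strings in `execute` stand in for real perception models, negotiating agents, and southbound infrastructure interfaces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Features:
    # Output of the perception layer (hypothetical fields).
    session_id: str
    congestion: float       # 0.0 (idle) to 1.0 (saturated) radio load estimate
    toxicity: float         # 0.0 to 1.0 content-policy score
    predicted_value: float  # forecast subscriber value for this session


@dataclass(frozen=True)
class Decision:
    # Output of the reasoning layer: what to do, with no infrastructure detail.
    session_id: str
    extra_resource_blocks: int
    block_content: bool


def perceive(raw: dict) -> Features:
    """Perception layer stand-in: domain-specific models would run here."""
    return Features(raw["session_id"], raw["prb_utilization"],
                    raw["toxicity_score"], raw["ltv_forecast"])


def reason(f: Features) -> Decision:
    """Reasoning layer stand-in: one fixed rule in place of negotiating agents."""
    grant = 2 if f.congestion < 0.8 and f.predicted_value > 50.0 else 0
    return Decision(f.session_id, grant, f.toxicity >= 0.9)


def execute(d: Decision) -> list[str]:
    """Execution layer: translate a decision into (hypothetical) infrastructure commands."""
    cmds = []
    if d.extra_resource_blocks:
        cmds.append(f"ran.assign_prb session={d.session_id} n={d.extra_resource_blocks}")
    if d.block_content:
        cmds.append(f"cdn.invalidate session={d.session_id}")
    return cmds
```

Keeping `Decision` free of infrastructure detail is the point of the split: the reasoning logic can change without touching the code that talks to radios or caches.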

What makes this different from previous attempts at unified OSS/BSS platforms is that the reasoning layer uses agentic architectures rather than rule engines or monolithic models. An agent responsible for spectrum allocation does not merely optimize radio efficiency. It queries agents responsible for subscriber revenue forecasting and content delivery cost to understand the business value of allocating additional bandwidth to a specific user session. An agent managing content moderation does not just score toxicity. It coordinates with advertising yield management to understand the revenue impact of delaying or removing content from high-traffic inventory. These negotiations happen through structured inter-agent communication protocols—typically gRPC or GraphQL APIs with strongly typed schemas—mediated by a consensus mechanism that resolves conflicts according to operator-defined priority hierarchies.
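The conflict-resolution step can be illustrated with a simple priority override. This is not a distributed consensus protocol such as Raft or Paxos: it assumes the proposals have already been gathered (in practice over the gRPC or GraphQL interfaces mentioned above) and applies a hypothetical operator-defined ranking, `PRIORITY`, to pick one action per contested resource.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposal:
    agent: str     # proposing agent's domain, e.g. "spectrum" or "ad_yield"
    resource: str  # the shared thing being decided, e.g. one session's bandwidth
    action: str


# Hypothetical operator-defined hierarchy: earlier entries win conflicts.
PRIORITY = ["threat_detection", "content_policy", "spectrum", "ad_yield"]


def resolve(proposals, priority=PRIORITY):
    """Pick one action per resource by agent rank.

    Agents missing from the hierarchy rank below every listed agent; among
    equal ranks, the earliest proposal wins.
    """
    rank = {agent: i for i, agent in enumerate(priority)}
    lowest = len(priority)
    winners = {}
    for p in proposals:
        current = winners.get(p.resource)
        if current is None or rank.get(p.agent, lowest) < rank.get(current.agent, lowest):
            winners[p.resource] = p
    return {res: (p.agent, p.action) for res, p in winners.items()}
```

A strict ranking is easy to audit, which helps the compliance case, but it ignores how much each agent stands to gain or lose; an operator wanting trade-offs rather than vetoes would replace the rank comparison with a weighted objective.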

The distributed ledger serves as the shared memory substrate. Each state update (a user moving between cell towers, an ad impression served, a content policy violation detected) gets written as a transaction to a local ledger node. Merkle proofs propagate to other nodes, which is sufficient for eventual consistency on non-latency-critical decisions; decisions that require global coordination need an additional synchronous agreement step among the affected nodes to achieve strong consistency. This architecture allows edge nodes to keep making locally scoped decisions during network partitions while maintaining auditability and the ability to roll back decisions when errors are detected. For TMT operators subject to regulatory scrutiny, the immutable decision log is not a feature add-on. It is the compliance foundation for demonstrating that algorithmic decisions meet transparency and non-discrimination requirements.
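The auditability property rests on a tamper-evident log. Below is a minimal single-node sketch, assuming a SHA-256 hash chain over canonical JSON and modeling rollback as an appended compensating entry so that history is never rewritten. Cross-node Merkle proof propagation and partition handling are out of scope, and `DecisionLog` is a hypothetical name.

```python
import hashlib
import json


class DecisionLog:
    """Append-only, hash-chained log of control-plane decisions (a sketch).

    Each entry commits to the previous entry's hash, so editing any past entry
    breaks verification. Rollback appends a compensating entry rather than
    deleting history.
    """
    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev: str, body: str) -> str:
        return hashlib.sha256((prev + body).encode()).hexdigest()

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = json.dumps(record, sort_keys=True)  # canonical form: sorted keys
        digest = self._digest(prev, body)
        # Store a decoded copy so later mutation of the caller's dict cannot desync the log.
        self.entries.append({"prev": prev, "record": json.loads(body), "hash": digest})
        return digest

    def rollback(self, target_hash: str, reason: str) -> str:
        # The original entry stays in place; the reversal is itself an auditable entry.
        if not any(e["hash"] == target_hash for e in self.entries):
            raise KeyError(target_hash)
        return self.append({"type": "compensate", "reverts": target_hash, "reason": reason})

    def verify(self) -> bool:
        prev = self.GENESIS
        for e in self.entries:
            body = json.dumps(e["record"], sort_keys=True)
            if e["prev"] != prev or e["hash"] != self._digest(prev, body):
                return False
            prev = e["hash"]
        return True
```

Treating a rollback as a new, linked entry is one way to reconcile "immutable log" with "roll back decisions": the erroneous decision and its correction both remain visible to an auditor.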

The Talent and Vendor Reality

The constraint is not technology availability. It is the scarcity of engineering talent that understands telecommunications protocols, distributed systems consensus algorithms, and agent-based AI simultaneously. A 2026 survey of North American and European Tier 1 carriers found that seventy-three percent identified "platform engineers with cross-domain TMT and AI infrastructure expertise" as their top hiring constraint, ahead of data scientists or ML researchers. The day rate for contract engineers who can design control plane architectures integrating 3GPP standards compliance with multi-agent orchestration systems now ranges from $2,100 to $3,400 in major markets.

The vendor landscape is split between hyperscalers offering managed AI platforms that lack telecommunications-specific capabilities and traditional network equipment providers adding AI features to legacy OSS products without fundamental architectural rethinking. The gap is being filled by a new category of infrastructure software providers building control plane substrates specifically for TMT operators. These platforms provide agent orchestration frameworks with pre-built integrations to telecommunications protocols, distributed ledger synchronization optimized for edge network topologies, and compliance observability tooling that maps agent decisions to regulatory requirements. Adoption is concentrated among operators making early bets: Telefónica's UNICA platform, Vodafone's network AI initiatives, and SK Telecom's autonomous network programs all reference control plane consolidation as explicit architectural goals in their 2026 technical roadmaps.

The risk is vendor lock-in at the control plane layer, which becomes the most critical infrastructure component. Operators are responding by demanding open APIs and insisting on multi-cloud deployment models where the control plane can orchestrate resources across on-premises edge infrastructure, hyperscaler regions, and specialized AI compute providers. The operational complexity is substantial, but the alternative—maintaining fragmented systems with exponentially growing coordination overhead—is economically untenable at current traffic growth rates.

What to Do Next Quarter

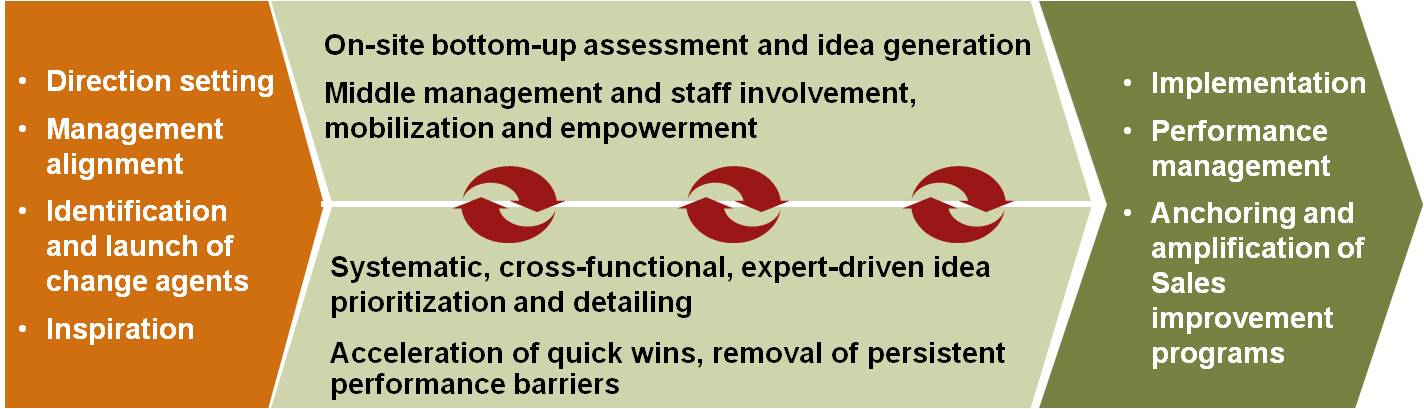

For TMT executives evaluating this transition, three moves have disproportionate impact in the next ninety days.

First, conduct a total cost of ownership audit across all production AI inference workloads, specifically measuring data movement costs and duplicate feature engineering pipelines. The majority of operators discover that thirty to fifty percent of inference compute is redundant preprocessing that could be eliminated with shared feature stores and unified state representation.

Second, identify one high-value operational domain where decision latency and coordination overhead are measurable constraints (typically either real-time advertising optimization or radio resource allocation) and prototype a control plane architecture that consolidates related decision systems. The goal is not full production deployment but proving that shared state and agent-based coordination reduce both cost and latency compared to current integration patterns.

Third, establish a cross-functional architecture team with representatives from network operations, platform engineering, data science, and compliance, tasked with defining control plane API contracts and inter-agent communication protocols. The absence of shared vocabulary and interface standards is the primary barrier to moving from pilot projects to production rollout.

These are not innovation initiatives. They are operational necessities for maintaining unit economics as traffic scales and regulatory requirements expand.
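The first move, the total cost of ownership audit, largely comes down to finding features that several pipelines compute independently. A rough sketch of that measurement follows, assuming each pipeline's preprocessing can be itemized as named features with a daily compute cost and that identical names mean identical transformations (a real audit would have to verify that). The pipeline names and costs in the usage lines are made up.

```python
def redundant_share(pipelines: dict) -> float:
    """Fraction of preprocessing cost that a shared feature store could eliminate.

    pipelines maps a pipeline name to {feature_name: cost_per_day}. A feature
    computed by several pipelines only needs computing once (we keep the
    cheapest copy); every other copy is counted as redundant.
    """
    total = 0.0
    cheapest = {}
    for features in pipelines.values():
        for name, cost in features.items():
            total += cost
            cheapest[name] = min(cost, cheapest.get(name, cost))
    if total == 0:
        return 0.0
    return (total - sum(cheapest.values())) / total


# Usage with hypothetical pipelines and daily costs.
pipelines = {
    "ad_optimization": {"session_geo": 30.0, "device_embed": 20.0, "bid_history": 10.0},
    "content_recs": {"session_geo": 30.0, "device_embed": 20.0, "watch_history": 15.0},
    "capacity_planning": {"session_geo": 25.0, "cell_load": 40.0},
}
print(f"{redundant_share(pipelines):.0%} of preprocessing cost is duplicated")
```

Name matching is the weak point of this approach: two pipelines may compute "the same" feature with different windows or encodings, so the figure it produces is an upper bound to be confirmed by inspecting the transformations themselves.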